Description,

Given a list of directory info including directory path, and all the files with contents in this directory, you need to find out all the groups of duplicate files in the file system in terms of their paths.

A group of duplicate files consists of at least two files that have exactly the same content.

A single directory info string in the input list has the following format:

“root/d1/d2/…/dm f1.txt(f1_content) f2.txt(f2_content) … fn.txt(fn_content)”

It means there are n files (f1.txt, f2.txt … fn.txt with content f1_content, f2_content … fn_content, respectively) in directory root/d1/d2/…/dm. Note that n >= 1 and m >= 0. If m = 0, it means the directory is just the root directory.

The output is a list of group of duplicate file paths. For each group, it contains all the file paths of the files that have the same content. A file path is a string that has the following format:

“directory_path/file_name.txt”

Example 1:

Input:

["root/a 1.txt(abcd) 2.txt(efgh)", "root/c 3.txt(abcd)", "root/c/d 4.txt(efgh)", "root 4.txt(efgh)"]

Output:

[["root/a/2.txt","root/c/d/4.txt","root/4.txt"],["root/a/1.txt","root/c/3.txt"]]

Note:

No order is required for the final output.

You may assume the directory name, file name and file content only has letters and digits, and the length of file content is in the range of [1,50].

The number of files given is in the range of [1,20000].

You may assume no files or directories share the same name in the same directory.

You may assume each given directory info represents a unique directory. Directory path and file info are separated by a single blank space.

Follow-up beyond contest:

Imagine you are given a real file system, how will you search files? DFS or BFS?

If the file content is very large (GB level), how will you modify your solution?

If you can only read the file by 1kb each time, how will you modify your solution?

What is the time complexity of your modified solution? What is the most time-consuming part and memory consuming part of it? How to optimize?

How to make sure the duplicated files you find are not false positive?

HashMap solution

Though the content of [1 - 50] length can add up to 50 factorial keys, the description said the file count is in range of [1, 20000], which is not bad to use the file content as the key of hashmap.

Time complexity : O(n).

Space complexity : O(n).

class Solution(object):

def findDuplicate(self, paths):

"""

:type paths: List[str]

:rtype: List[List[str]]

"""

ret = []

hm = dict()

self.buildHashMap(paths, hm)

return [v for v in hm.itervalues() if len(v)>1]

def buildHashMap(self, paths, hm):

for s in paths:

tmpList = s.split()

folder = tmpList[0]

for i in xrange(1, len(tmpList)):

fileName, fileContent = tmpList[i].split('(')

fileContent = fileContent[:len(fileContent)-1]

group = hm.setdefault(fileContent, [])

group.append(folder + '/' + fileName)

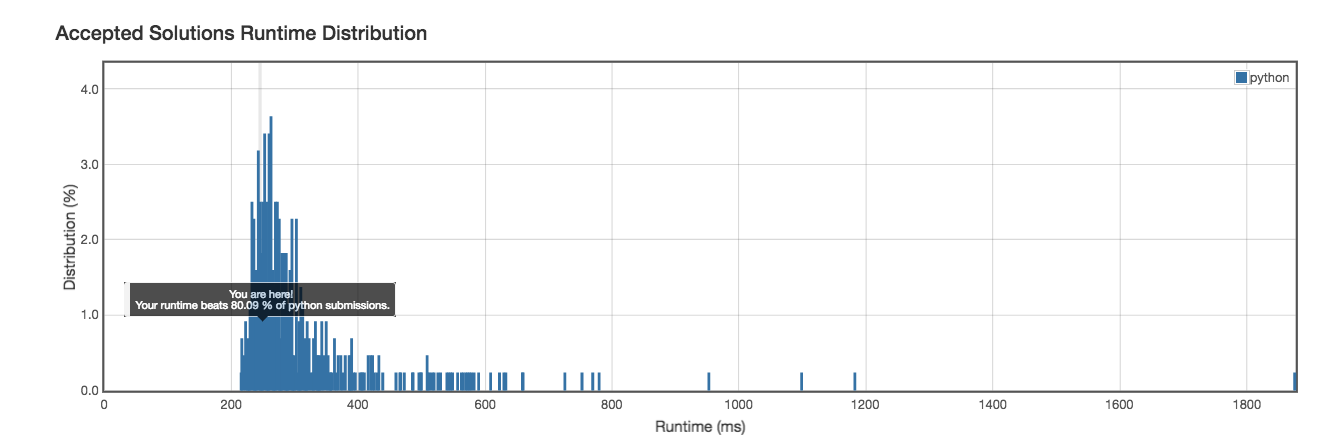

Runtime: 246ms

Runtime: 246ms

Follow-up beyond contest:

Imagine you are given a real file system, how will you search files? DFS or BFS?

Answer: Comparing BFS and DFS, the big advantage of DFS is that it has much lower memory requirements than BFS, because it’s not necessary to store all of the child pointers at each level. Depending on the data and what you are looking for, either DFS or BFS could be advantageous. In this specific case, I prefer DFS.

If the file content is very large (GB level), how will you modify your solution?

Answer: use file size + file’s MD5. In worst case, we need to compare the files byte by byte.

If you can only read the file by 1kb each time, how will you modify your solution?

Answer: it is not an issue since majority of our files can be differentiate with size + MD5.

What is the time complexity of your modified solution? What is the most time-consuming part and memory consuming part of it? How to optimize?

Answer: O(n^2) in worst case scenario.

How to make sure the duplicated files you find are not false positive?